The Economics of the Fortress: Breaking Institutional Friction in Nursing

Author: Jude Chartier RN / AI Nurse Hub Date: March 30, 2026

Date: March 19, 2026

Abstract

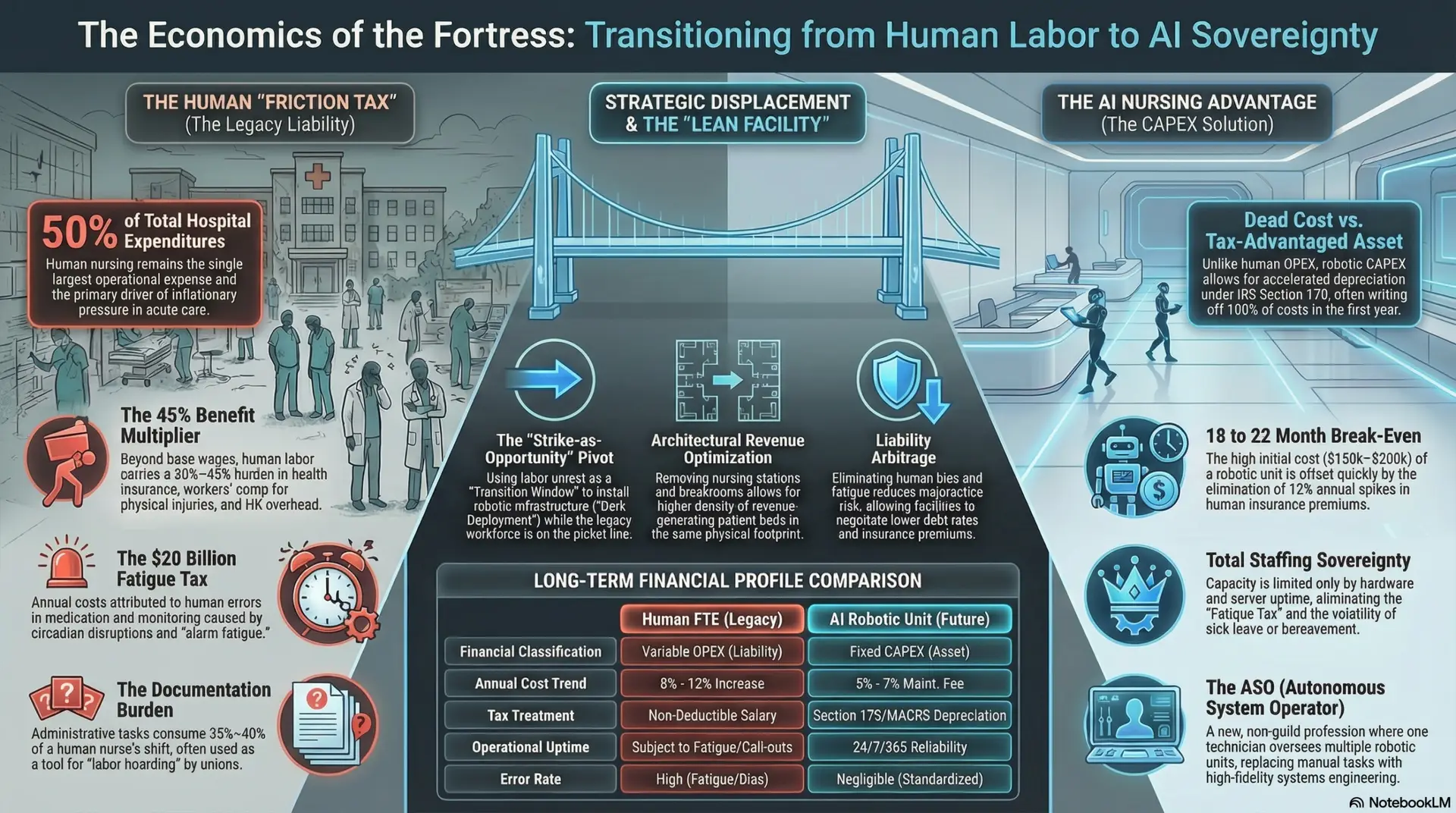

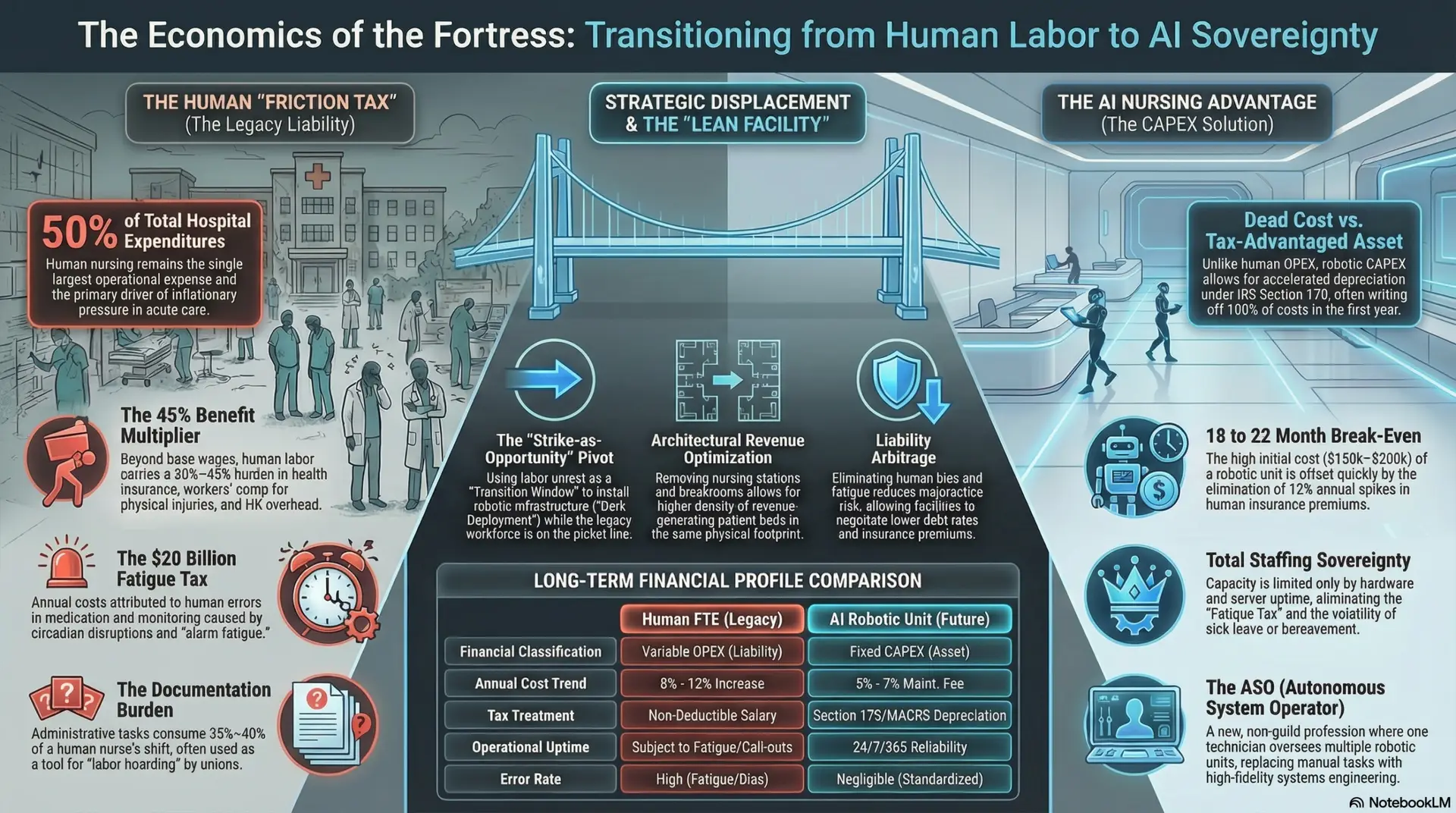

The integration of Artificial Intelligence (AI) and embodied robotic systems into healthcare has reached a technological tipping point. However, the transition to “full integration” remains stalled by a complex “Fortress of Resistance” constructed by legacy labor unions, legal entities, and financial gatekeepers. This article examines the economic and professional motivations behind this obstruction and argues that the current “collaborative integration” model is insufficient. Instead, a “decisive displacement” strategy—leveraging strike windows for technological reset and redefining professional credentials—is proposed as the only viable path to realizing the efficiency and safety gains of 2026-era medical AI.

Introduction

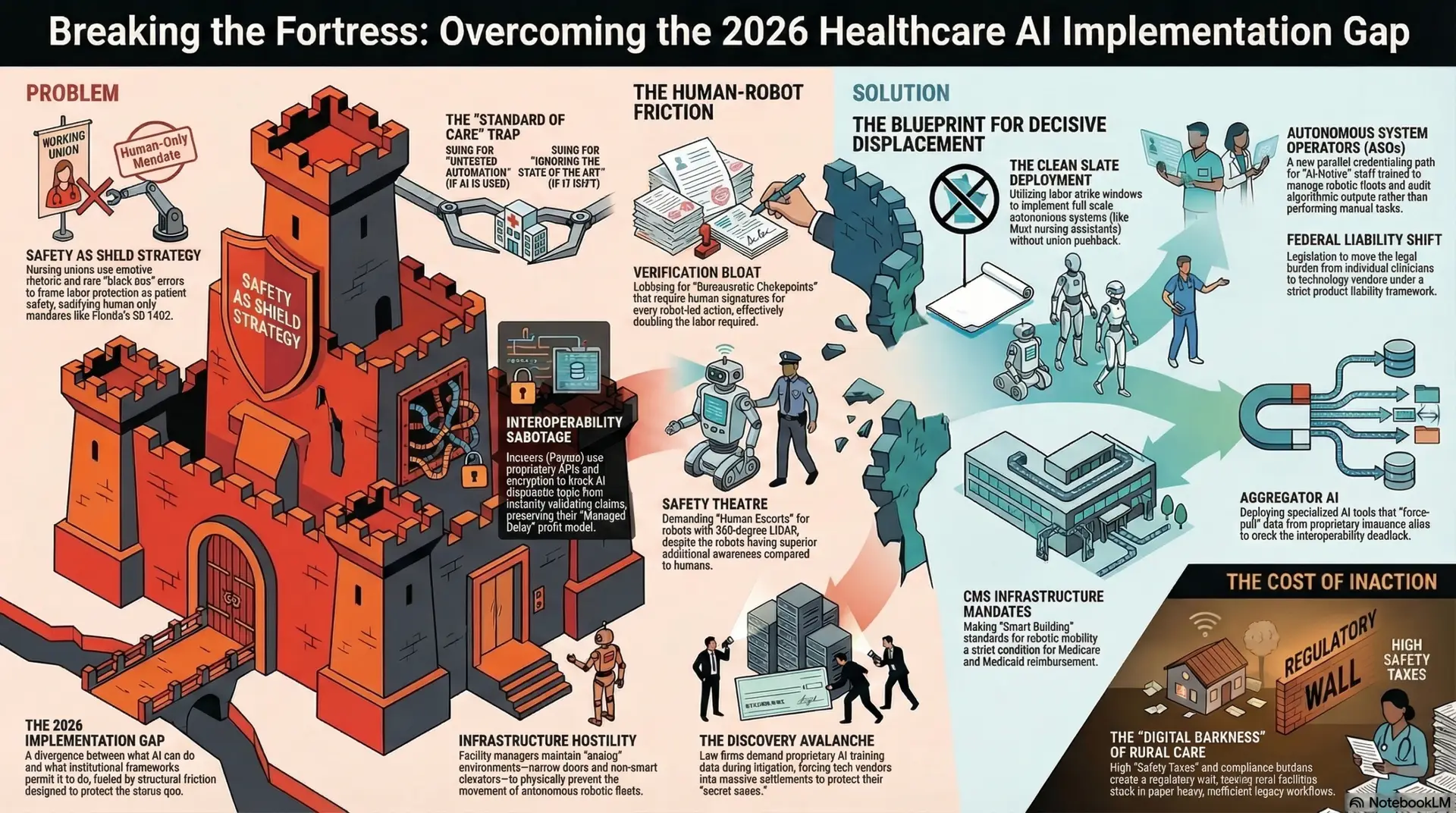

As of 2026, the global healthcare sector finds itself in a state of “implementation paralysis.” While Large Language Models (LLMs) and autonomous robotic transporters have achieved functional parity with human staff in many administrative and logistical domains, the systemic rollout of these technologies is being throttled. This phenomenon, termed the “2026 Implementation Gap,” is characterized by a divergence between what the technology can do and what the institutional framework permits it to do. We are no longer limited by the accuracy of the diagnostic algorithm or the dexterity of the surgical bot; we are limited by the structural friction designed to protect the status quo.

The central thesis of this analysis is that the “Fortress of Resistance” slowing AI adoption is not primarily a byproduct of legitimate safety concerns. Rather, it is a calculated defense of legacy economic interests. Even as AI systems demonstrate superior accuracy in diagnostics and embodied AI achieves higher reliability in medication dispensing, labor and legal entities are leveraging regulatory friction to protect their professional standing. To overcome this, healthcare leadership must transition from a strategy of “incremental negotiation,” which often invites the “fox into the henhouse,” to one of “decisive displacement”—a proactive restructuring of the healthcare workforce and its underlying legal liabilities.

The Labor Bloc: Nursing Unions and the Crisis of Existential Defense

Nursing unions, most notably National Nurses United (NNU) and the California Nurses Association (CNA), represent the most formidable front in the resistance against healthcare automation. By 2025, these organizations had shifted from debating AI ethics to active strategic obstruction, recognizing that “full integration” effectively signals the end of the traditional nursing guild (National Nurses United, 2025).

The “Safety as Shield” Strategy

Unions have successfully framed their opposition to AI as a patient safety initiative, employing emotive rhetoric to sway public opinion and legislative bodies. By magnifying rare “black box” errors or minor robotic glitches, they mask the underlying economic motivation: the protection of human-only labor slots and the maintenance of artificial scarcity in the nursing market. The “Right to Human Care” narrative has been codified in several states (e.g., Florida SB 1402, 2025), creating a legislative mandate for human presence even when AI monitoring is objectively more consistent, less prone to fatigue, and free from the cognitive biases that plague human clinicians during long shifts.

Leveraging the Strike for Structural Reset

The traditional approach to labor resistance—negotiation and “Joint AI Governance”—has proven to be a strategic failure for hospital administrations. Unions have used their seats on AI committees to “slow-walk” pilots, introduce redundant “human-check” layers that destroy ROI, and demand “Algorithmic Veto Power” over staffing ratios. This is not collaboration; it is institutional sabotage.

A more effective strategy, currently being observed in private “AI-First” health systems, is the “Clean Slate” deployment. This model views nursing strikes not as a crisis to be settled through concessions, but as a “Transition Window.” During these periods of labor vacancy, administrators can implement full-scale embodied AI systems—such as Moxi-class nursing assistants and autonomous vitals-capture arrays—without the friction of union pushback. Hospitals can then hire “AI-Native” staff: a new class of healthcare professionals trained specifically to manage robotic fleets and validate high-level algorithmic outputs. This displacement strategy effectively breaks the monopoly of the legacy guild model and resets the labor-technology balance.

The Financial Gatekeepers: Payors and the Profitability of Friction

The resistance is not limited to the bedside. Private insurers (Payors) have discovered that AI-driven efficiency is an existential threat to their “Managed Delay” profit model. The insurance industry thrives on the delta between premium collection and claim payout; friction is the mechanism that maintains this delta.

Historically, insurers have relied on the “Hassle Factor”—a combination of administrative friction, archaic prior authorization forms, and slow manual reviews—to discourage utilization and control costs. If an AI system can instantly validate a complex surgical claim against a patient’s entire longitudinal history and current clinical guidelines, the delay-based profit evaporates. In response, payors have engaged in “Interoperability Sabotage,” utilizing proprietary APIs and “data-lake” encryption to prevent universal AI diagnostic tools from accessing the data necessary for autonomous authorization. Furthermore, through “Regulatory Capture,” they have lobbied for mandates requiring human signatures on all “high-acuity” claims, ensuring that administrative friction remains a billable, albeit artificial, constant in the system.

The Liability Complex: Legal “Gold Digging” and Stalling

The legal industry remains one of the primary beneficiaries of a friction-heavy, error-prone healthcare system. The multibillion-dollar medical malpractice complex is built on the concept of “Individual Human Error,” a target that is easily litigated before a jury. AI “Product Liability,” by contrast, is a more difficult, consolidated target for trial lawyers, as it involves litigating against massive tech corporations with vast legal resources.

The “Standard of Care” Trap

Legal firms have created a “Standard of Care Trap” to stall AI adoption. They argue in court that failing to use a highly accurate AI tool is malpractice, while simultaneously arguing that using such a tool is “Experimental Risk” that shifts 100% of the liability to the human provider. This creates a state of permanent legal paralysis for hospitals. If they adopt AI, they are sued for “untested automation”; if they don’t, they are sued for “ignoring the state of the art.” This legal pincer movement is designed to keep the human clinician—and their malpractice insurance—as the primary “pocket” for litigation.

“Gold Digging” via Discovery: The “Discovery Avalanche”

In 2026, “Algorithmic Ambulance Chasing” has become a specialized legal field. Firms utilize “The Discovery Avalanche” to demand the weights, training data, and internal bias audits of LLMs and surgical robots during litigation. Because tech vendors are desperate to protect their proprietary “secret sauce” from public disclosure or competitor scrutiny, they often opt for massive out-of-court settlements. This effectively turns AI adoption into a “settlement tax,” where the cost of defending the technology exceeds the efficiency gains it provides.
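The “settlement tax” claim reduces to a break-even comparison between efficiency gains and expected litigation costs. The sketch below is one illustrative way to express that arithmetic; the function names, the cost model, and every figure in it are hypothetical assumptions made for illustration and are not data from this article or any real health system.

```python
def net_annual_value(efficiency_gain, suits_per_year, avg_settlement, avg_defense_cost):
    """Efficiency gain minus expected litigation cost, all in the same currency unit.

    Assumed (hypothetical) cost model: every suit ends in a settlement and
    also incurs defense costs before settling.
    """
    expected_litigation_cost = suits_per_year * (avg_settlement + avg_defense_cost)
    return efficiency_gain - expected_litigation_cost


def settlement_tax_applies(efficiency_gain, suits_per_year, avg_settlement, avg_defense_cost):
    """True when litigation costs exceed the gains, i.e. adoption is a net loss."""
    return net_annual_value(
        efficiency_gain, suits_per_year, avg_settlement, avg_defense_cost
    ) < 0


if __name__ == "__main__":
    # Hypothetical figures chosen only to illustrate the break-even point.
    gain = 2_000_000       # assumed annual efficiency gain
    settlement = 600_000   # assumed average settlement per suit
    defense = 150_000      # assumed average defense cost per suit

    for suits in (1, 2, 3):
        value = net_annual_value(gain, suits, settlement, defense)
        print(f"{suits} suit(s)/year: net value {value:,}")
```

Under these assumed numbers the deployment stays net-positive at one or two suits per year and turns negative at three, which is the condition the paragraph above calls a “settlement tax.”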

Embodied AI: Physical and Infrastructure Friction

Even when software-based AI is accepted, the physical environment of healthcare—the domain of Embodied AI—is often sabotaged by those who manage the “physical plant.” Facility managers and janitorial unions, fearing the displacement of logistics and maintenance staff, have been documented “slow-walking” the infrastructure upgrades necessary for robotic mobility.

Tactics include:

The Structural Divide: The “Digital Darkness” of Rural Care

The “Fortress of Resistance” has created a secondary, more insidious effect: a widening structural divide between urban “Centers of Excellence” and rural clinics. The “Safety Tax”—the high cost of maintaining the AI Ethics Boards, multi-layered bias monitors, and specialized AI malpractice riders required by federal law (HHS, 2025)—acts as a massive regulatory wall.

Smaller rural facilities, unable to afford this “Compliance Burden,” remain in “Digital Darkness.” They are forced to rely on legacy, paper-heavy workflows to avoid the legal risks of modernization, which in turn makes them less efficient and less attractive to top-tier talent. This “passive obstruction” by the regulatory state ensures that AI remains a luxury of the wealthy, urban elite, further entrenching the power of the institutions that can afford to navigate the friction.

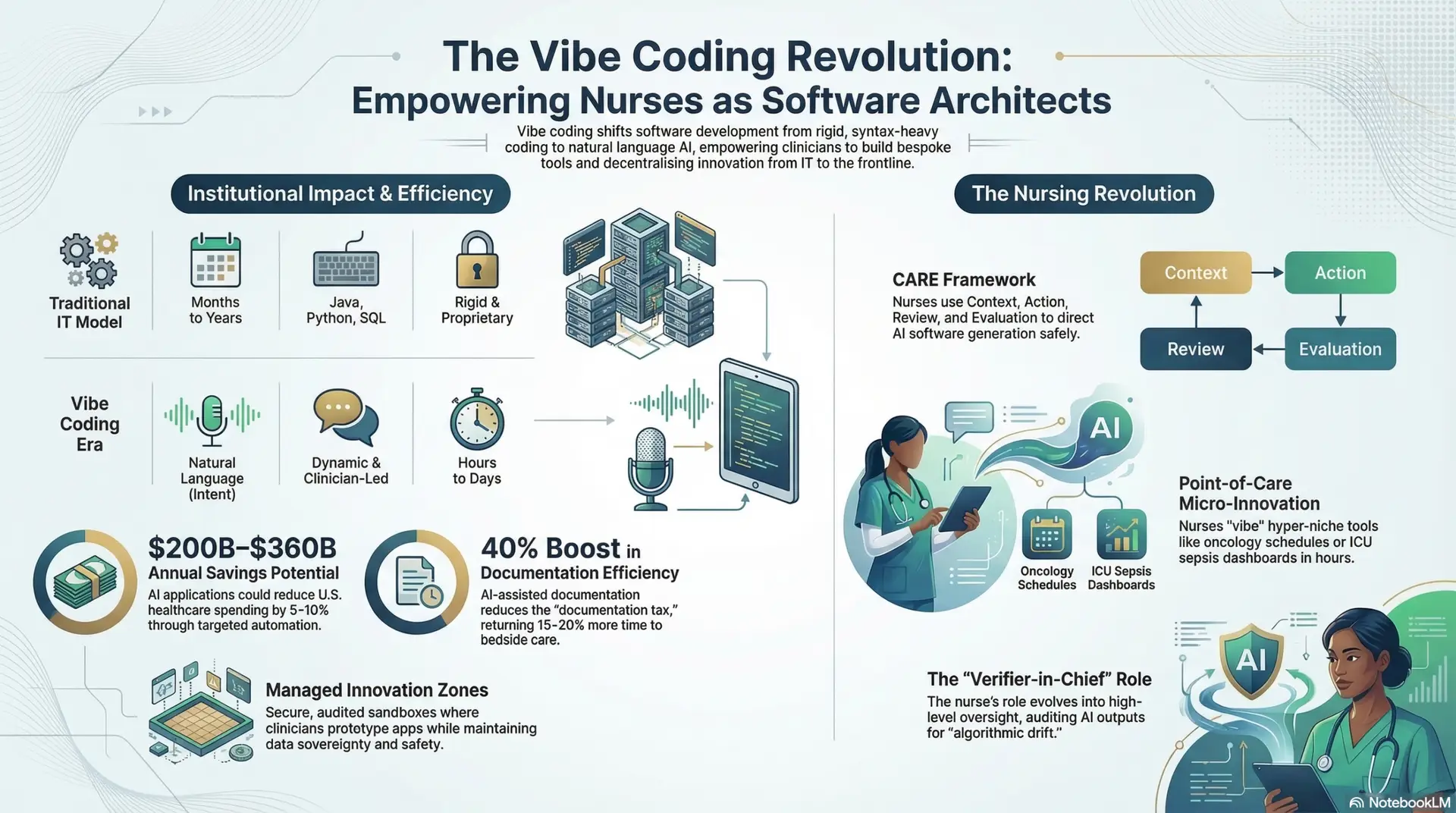

The Credentialing War: Redefining the Professional

The final battleground of AI integration is the “Credentialing War.” Professional guilds (AMA, ANA) are fighting to maintain strict barriers to entry based on manual tasks that AI can now perform. For example, the requirement for thousands of clinical hours in manual vitals-taking or basic suturing is being used to prevent “AI-Native” technicians from entering the field.

To counteract this, a new “Parallel Credentialing” system is required. This would create a category of “Autonomous System Operators” (ASOs)—professionals whose license is based on their ability to audit AI outputs, manage robotic fleets, and handle high-level clinical exceptions. By creating this parallel path, healthcare systems can bypass the “guild-gatekeepers” and staff their facilities with a workforce that is optimized for the 2030s rather than the 1970s.

Conclusion: Breaking the Fortress

The transition to AI ubiquity in healthcare is not a software update; it is an industrial revolution. The current “Fortress of Resistance” is a rational response from entities whose power is derived from the very inefficiencies AI is designed to solve.

To break this cycle and move toward a truly integrated healthcare future, the industry must move past “Stakeholder Engagement” and “Incrementalism.” Success requires:

The “winners” of the 2030 healthcare market will not be those who negotiated with the fortress, but those who had the resolve to replace it with a more efficient, autonomous, and human-independent architecture.

References
