The Economics of the Fortress: Breaking Institutional Friction in Nursing

Author: Jude Chartier RN / AI Nurse Hub Date: March 30, 2026

By: Jude Chartier RN / AI Nurse Hub

Date: February 18, 2026

Abstract

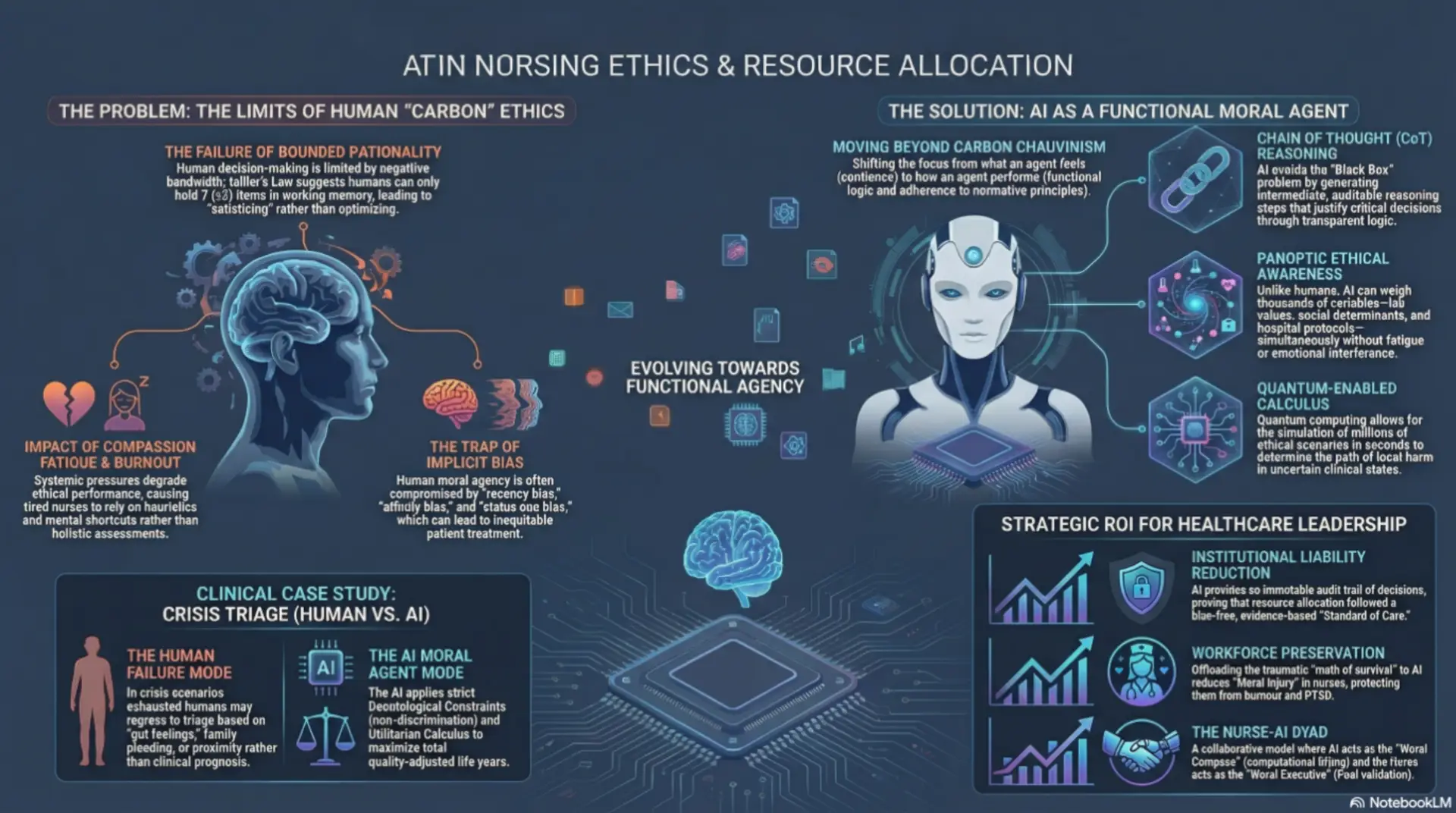

The rapid integration of Artificial Intelligence (AI) into the healthcare sector has catalyzed a contentious philosophical and practical debate regarding the nature of moral agency. The prevailing consensus among traditional bioethicists holds that AI cannot be designated a “moral agent” due to an inherent lack of biological sentience, intentionality, and phenomenological consciousness. This article challenges that anthropocentric standard as not only outdated but potentially harmful to patient outcomes. We posit that in the high-stakes, data-rich domain of nursing resource allocation, “Functional Moral Agency” (defined as the capacity to process complex ethical dilemmas, strictly adhere to normative principles, and transparently justify decisions through logic) is the only relevant metric for moral status. Drawing upon foundational concepts from machine ethics, the emergent capabilities of Chain of Thought (CoT) reasoning, and the probabilistic modeling power of quantum computing, we argue that future AI systems will not only meet the functional criteria for moral agency but will likely surpass human capacity for equitable ethical distribution. For nurse administrators, acknowledging this ontological shift offers a tangible Return on Investment (ROI) through the reduction of institutional liability, the mitigation of unconscious human bias in clinical triage, and the preservation of human staff for the relational aspects of care.

Introduction

In the current landscape of healthcare, the concept of the “moral agent” is intrinsically and historically tied to the human condition. Nursing ethics, deeply grounded in the philosophies of care, advocacy, and relationality, presumes that to act morally, one must be capable of feeling the weight of that action. Consequently, skepticism abounds regarding the role of Artificial Intelligence (AI) in ethical decision-making. Critics and traditionalists argue that because an algorithm cannot experience empathy, suffer the psychological consequences of a decision, or possess a “soul,” it lacks the requisite intentionality to be considered a moral agent (Wallach & Allen, 2008). This view posits that morality is a biological phenomenon, inextricably linked to the capacity for suffering.

However, this skepticism ignores a critical and increasingly urgent reality: human moral agency is failing under the crushing weight of systemic pressure. The modern healthcare environment is characterized by unprecedented cognitive overload, chronic compassion fatigue, and pervasive burnout, all of which significantly degrade the ethical performance of human nurses. When a nurse is fatigued, their ability to perform complex ethical calculus diminishes, leading to decisions driven by heuristics rather than holistic assessment. If a tired nurse makes a biased triage decision that harms a patient, their biological “sentience” offers little comfort to the victim or their family. The presence of internal feelings does not guarantee external justice.

This article proposes a radical shift in perspective for the nursing profession. We argue that the requirement for internal biological feelings is a legacy constraint, a form of “Carbon Chauvinism,” that is ultimately irrelevant to patient outcomes. We hypothesize that while AI currently lacks sentience, it is rapidly evolving into a Functional Moral Agent. Through the convergence of Chain of Thought (CoT) reasoning, which provides interpretability, and the probabilistic power of quantum computing, which handles immense complexity, AI will soon be capable of acting as an unbiased ethical arbiter. For the staff nurse, this technological evolution is not a replacement but a relief from the “impossible math” of modern ethics; for the administrator, it is a mechanism to ensure the equitable, defensible, and consistent delivery of care.

Theoretical Framework: Deconstructing Anthropocentric Bias

To understand why AI can—and should—be considered a moral agent, we must critically interrogate the origins of the definitions we currently use. The philosophical edifices that govern nursing ethics were not constructed in a vacuum; they were built in specific historical epochs where the very concept of “machine intelligence” was an impossibility. We are currently attempting to regulate 21st-century neural networks using 18th-century definitions of personhood.

The Historical Contingency of Moral Agency

Traditional ethical frameworks, such as Aristotelian virtue ethics or Kantian deontology, were formulated in an era where humans were the exclusive proprietors of logic, reason, and calculation. When Aristotle defined phronesis (practical wisdom) or when Kant defined the “rational will,” they were observing a world where intelligence was inextricably bound to biology. There was no “separation of concern” between the processor (the brain) and the person (the soul).

Because these philosophers had only one data point for high-level intelligence—the human being—they naturally conflated the attributes of humanity (empathy, mortality, social connection) with the requirements for moral agency. They assumed that to “know the good” required a human soul because, in their time, nothing else was capable of knowing anything at all. This is not a universal truth; it is a sampling error. We have defined “moral agency” based on a sample size of one species.

This historical limitation has led to a “Cartesian Hangover” in modern bioethics. Descartes famously separated the mind (res cogitans) from the body (res extensa), but he assumed only the human body could house the mind. Today, AI presents a third category: res computans—a thinking thing that is neither soul nor body. By clinging to definitions that require biological intentionality, we are committing a logical fallacy known as “moving the goalposts.” As machines master logic, we retreat to “creativity.” As they master creativity, we retreat to “empathy.” We must stop retreating and acknowledge that agency is not a biological privilege; it is a functional capability.

The Fallacy of Carbon Chauvinism

This conflation results in what modern bioethicists and philosophers of technology term “Carbon Chauvinism”—the unfounded, prejudicial assumption that moral status requires biological wetware. This bias privileges the substrate of the agent (carbon-based neurons vs. silicon chips) over the performance of the agent.

Consider the “Duck Test” of functionality: if it walks like a duck and quacks like a duck, we treat it as a duck. Yet, in ethics, we reject this. If an AI weighs evidence like a judge, applies the law like a judge, and delivers a fair verdict like a judge, we still refuse to call it a “judge” because it does not feel the “gravity” of the sentence. This is a “Category Error.” In a clinical setting, morality is effectively a function of output, not internal state. The patient’s experience of justice is determined by the decision made, not the emotions of the decision-maker.

Functional vs. Phenomenological Morality

We must therefore pivot to a new taxonomy. Following Wallach and Allen (2008) in their seminal work Moral Machines, we distinguish between Operational Morality (the ability to act in a way that aligns with moral standards) and full moral agency (the ability to feel moral sentiments).

In nursing, we already value functionalism over phenomenology in many areas. We do not ask if a ventilator “cares” about the patient; we ask if it sustains life effectively according to set parameters. Similarly, if an AI acts within accepted ethical bounds—upholding the principles of beneficence, non-maleficence, and justice—it is functionally indistinguishable from a moral agent from the perspective of the recipient of care. The “Black Box” of the human mind is full of bias, fatigue, and error; the “Black Box” of the AI is becoming increasingly transparent and consistent.

James Moor (2006) adds a further distinction between “implicit” and “explicit” ethical agents. Current machines are “implicit”; they have safety rules hard-coded by programmers. The AI of the near future will be explicit: capable of representing ethics as computable variables and calculating the best outcome in novel, unprogrammed situations. An explicit agent can weigh conflicting values, such as patient autonomy versus non-maleficence, and derive a course of action that optimizes for the ethical hierarchy defined by the institution.

The Technological Engine of Agency

The argument that humans are superior moral agents rests on the unspoken assumption that human cognition is boundless and objectively rational. However, decades of cognitive science refute this. Herbert Simon’s concept of Bounded Rationality (1955) suggests that human decision-making is severely limited by time, available information, and, crucially, cognitive bandwidth. Humans do not optimize; they “satisfice”—they choose the first option that meets the minimum threshold of acceptability, often ignoring better, more complex solutions.
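The difference between satisficing and optimizing can be made concrete with a minimal Python sketch. The function names, the care plans, and the benefit scores are hypothetical and chosen only for illustration; they are not clinical data and do not come from Simon's paper.

```python
from typing import Callable, Iterable, Optional, TypeVar

T = TypeVar("T")


def satisfice(options: Iterable[T], score: Callable[[T], float],
              threshold: float) -> Optional[T]:
    """Return the first option whose score meets the threshold, or None."""
    for option in options:
        if score(option) >= threshold:
            return option  # stop searching as soon as something is "good enough"
    return None


def optimize(options: Iterable[T], score: Callable[[T], float]) -> T:
    """Examine every option and return the highest-scoring one."""
    return max(options, key=score)


# Hypothetical care plans paired with illustrative benefit scores.
plans = [("plan_a", 0.55), ("plan_b", 0.72), ("plan_c", 0.91)]


def benefit(plan):
    return plan[1]


first_acceptable = satisfice(plans, benefit, threshold=0.5)
best = optimize(plans, benefit)
print(first_acceptable, best)
```

With these numbers the satisficer stops at the first plan that clears the threshold, while the optimizer pays the cost of scoring every plan and returns the strongest one.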

Surpassing Biological Limitations

Miller’s Law states that the average human working memory can hold only about seven items, plus or minus two (Miller, 1956). In a complex triage scenario that involves weighing lab values, infection risks, bed availability, social determinants of health, staffing ratios, and legal directives, a human nurse cannot process all variables simultaneously. The cognitive load exceeds biological capacity. Instead, humans rely on heuristics (mental shortcuts), which are prone to severe bias and error. We overvalue the most recent information (recency bias), we favor patients who resemble us (affinity bias), and we avoid difficult decisions to preserve energy (status quo bias).

Advanced computing faces no such epistemic limits. An AI system possesses Panoptic Ethical Awareness: the ability to weigh hundreds or thousands of variables simultaneously without fatigue, distraction, or emotional interference. Where a human nurse might unconsciously triage based on a patient’s “likability” or perceived compliance, an AI agent evaluates based purely on prognosis, resource utility, and pre-defined ethical axioms. The AI does not get hungry, it does not hold grudges, and it does not suffer from the cognitive tunneling that occurs during a “code blue.”

Chain of Thought (CoT) Reasoning

A primary and valid criticism of AI has been the “Black Box” problem—the inability to explain why a decision was made. Trusting a moral decision to an opaque algorithm is ethically perilous. However, recent advancements in Large Language Models (LLMs) utilize Chain of Thought (CoT) reasoning (Wei et al., 2022). This technique forces the AI to generate intermediate reasoning steps—a “monologue” of logic—before arriving at a conclusion.

In nursing practice, this is equivalent to a preceptor asking a student to “show their work.” When an AI recommends, “Allocate Ventilator A to Patient Y,” CoT allows it to justify the decision transparently: “Step 1: Patient X has a 10% survival probability due to multisystem organ failure. Step 2: Patient Y has an 80% survival probability with immediate intervention. Step 3: The hospital’s utilitarian protocol mandates maximizing lives saved. Step 4: Allocating to Patient X would likely result in the loss of both patients. Conclusion: Allocate to Patient Y.” This capacity for reasoned, auditable justification is the hallmark of moral agency and exceeds the capability of a panicked human who may act on “gut instinct” alone.
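The ventilator example above can be stored as a structured, reviewable record. The following is a minimal sketch in Python, assuming a hypothetical `ReasoningTrace` class. It does not call a language model; it only shows one way the intermediate steps and the conclusion might be kept together for later audit.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReasoningTrace:
    """Hypothetical container for a recommendation and its reasoning steps."""
    recommendation: str
    steps: List[str] = field(default_factory=list)

    def add_step(self, text: str) -> None:
        self.steps.append(text)

    def render(self) -> str:
        """Format the steps in order, followed by the conclusion."""
        lines = [f"Step {i}: {s}" for i, s in enumerate(self.steps, start=1)]
        lines.append(f"Conclusion: {self.recommendation}")
        return "\n".join(lines)


# Steps copied from the ventilator example in the text.
trace = ReasoningTrace(recommendation="Allocate to Patient Y.")
trace.add_step("Patient X has a 10% survival probability due to multisystem organ failure.")
trace.add_step("Patient Y has an 80% survival probability with immediate intervention.")
trace.add_step("The hospital's utilitarian protocol mandates maximizing lives saved.")
trace.add_step("Allocating to Patient X would likely result in the loss of both patients.")
print(trace.render())
```

Keeping the steps as separate entries rather than one block of text lets a reviewer challenge any single premise, for example the survival estimate in Step 1, without rereading the whole justification.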

Quantum Computing: The Calculus of Ethics

Perhaps the most profound leap will come from the integration of quantum computing. Classical computers process information in binary (0 or 1), an approach that struggles with the nuances and “grey areas” of bioethics, where answers are rarely absolute. Quantum computers, utilizing qubits and the principle of superposition, can process probabilistic states, simulating the uncertainty inherent in clinical prognosis and ethical dilemmas.

Resource allocation is, mathematically speaking, a massive optimization problem. It requires calculating the greatest good for the greatest number across a vast number of variables and potential future timelines. This is a utilitarian calculus that the human brain attempts to approximate but often fails at due to emotional interference and lack of computational power. A quantum-enabled AI could run millions of ethical simulations in seconds, determining the path of least harm with a precision biologically impossible for humans. It can foresee second- and third-order consequences of a triage decision that a human nurse could never predict.

Clinical Case Study: Crisis Resource Allocation

To illustrate the necessity of this shift, consider a pandemic scenario where a regional ICU is at capacity. The Charge Nurse is exhausted, operating on minimal sleep, suffering from moral injury, and is suddenly tasked with deciding who receives the last available ECMO (extracorporeal membrane oxygenation) unit.

The Human Failure Mode:

Research indicates that under such extreme pressure, human decision-making regresses. Implicit biases regarding age (“the elderly have lived their lives”), race (systemic underestimation of pain or viability), or socioeconomic status seep into the “gut feeling.” Decision fatigue sets in, leading to choices that are reactive rather than reflective. The nurse may choose the patient who is physically closer, or the one whose family is pleading most loudly, rather than the one with the best clinical prognosis.

The AI Moral Agent:

In contrast, the AI agent applies a pre-programmed Deontological Constraint (e.g., “Do not discriminate based on race, gender, or ability to pay”) combined with a strict Utilitarian Calculus (maximize total quality-adjusted life years). It ignores the “social worth” of the patient and focuses strictly on clinical efficacy and survivability. It instantly reviews the medical history, genetic markers, and real-time vitals of all candidates.

In this specific context, the AI is not just a tool; it is a superior moral agent. It is immune to the tribalism, exhaustion, and noise that plague human morality. By removing the “human element,” we paradoxically achieve a more humane and equitable outcome, ensuring that life-saving resources are distributed based on justice rather than chance or bias.
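The rule described for the AI agent, a deontological constraint combined with a utilitarian ranking, can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `Candidate` fields, the `rank_candidates` function, the list of protected attributes, and the numbers. It is not a validated triage algorithm.

```python
from dataclasses import dataclass
from typing import Dict, List

# Attributes the deontological constraint forbids the ranking from using.
PROTECTED = {"race", "gender", "ability_to_pay"}


@dataclass
class Candidate:
    patient_id: str
    survival_probability: float   # estimated chance of survival with treatment
    qalys_if_survives: float      # estimated quality-adjusted life years gained
    demographics: Dict[str, str]  # recorded, but never read by the ranking


def expected_qalys(c: Candidate) -> float:
    """Utilitarian score: probability of survival times QALYs gained."""
    return c.survival_probability * c.qalys_if_survives


def rank_candidates(candidates: List[Candidate],
                    features_used: List[str]) -> List[Candidate]:
    """Rank by expected QALYs, refusing to run if a protected attribute is used."""
    leaked = PROTECTED & set(features_used)
    if leaked:
        raise ValueError(f"protected attributes in scoring: {sorted(leaked)}")
    return sorted(candidates, key=expected_qalys, reverse=True)


# Hypothetical candidates; the numbers are illustrative, not clinical data.
candidates = [
    Candidate("A", 0.8, 10.0, {"gender": "F"}),
    Candidate("B", 0.1, 12.0, {"gender": "M"}),
    Candidate("C", 0.6, 15.0, {"gender": "F"}),
]
ranked = rank_candidates(candidates, ["survival_probability", "qalys_if_survives"])
print([c.patient_id for c in ranked])
```

The constraint is checked before any scoring happens, so a configuration that lists a protected attribute fails loudly instead of silently influencing the order.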

Administrative Implications: The ROI of Artificial Agency

For the Nurse Administrator and the C-Suite, the adoption of Artificial Moral Agents is not merely a philosophical upgrade; it is a strategic and financial imperative.

Liability Reduction

Medical malpractice suits often hinge on whether a provider adhered to the “Standard of Care.” Human adherence is variable; AI adherence is absolute. An AI agent programmed with the hospital’s ethical protocols will never deviate due to fatigue or distraction. In a legal defense, the hospital can demonstrate that resource allocation decisions were made based on a verified, bias-free, evidence-based algorithm. The Chain of Thought logs provide an immutable audit trail, proving that the decision was made rationally and in accordance with policy—a rigorous defense against claims of negligence or discrimination.
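One common way to make such a log tamper-evident is a hash chain, in which each entry stores a hash of the entry before it. The sketch below is an assumption about how the audit trail could be built, not a description of any existing hospital system, and the function names are hypothetical. A hash chain makes later edits detectable; it does not by itself prevent them.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: Dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict], payload: Dict) -> None:
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev": prev_hash,
                "hash": _digest(prev_hash, payload)})


def verify(log: List[Dict]) -> bool:
    """Recompute every hash in order; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: List[Dict] = []
append_entry(audit_log, {"decision": "Allocate to Patient Y", "policy": "utilitarian"})
append_entry(audit_log, {"decision": "Authorized by charge nurse"})
print(verify(audit_log))
```

If any stored payload is altered after the fact, `verify` returns `False` for the whole log, which is the property a legal defense would rely on.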

Patient Outcomes and Equity

With the healthcare industry’s shift toward Value-Based Purchasing (VBP), hospitals are financially penalized for poor outcomes and readmissions. Furthermore, “Health Equity” is increasingly becoming a distinct quality metric affecting reimbursement. An AI that allocates resources based on pure need ensures that marginalized populations receive appropriate care, improving the hospital’s aggregate health outcomes. By systematically eliminating the implicit biases that lead to under-treatment of certain demographics, the AI Moral Agent directly contributes to the financial health of the institution.

Preservation of the Human Workforce

Perhaps the most significant ROI is the preservation of the nursing workforce. Making life-and-death decisions in a resource-scarce environment causes “Moral Injury”—a deep psychological wound that leads to burnout, PTSD, and resignation. By offloading the traumatic calculus of triage to an AI, we protect the staff nurse from this burden. The AI takes the responsibility for the “math” of survival, allowing the nurse to focus on the “art” of care—comforting the family, holding the patient’s hand, and providing the dignity that requires biological presence.

Counter-Arguments and The Human-in-the-Loop

Critics will inevitably ask: “Who is responsible when the AI makes a mistake?” This is the Responsibility Gap. If an AI denies care and the patient dies, we cannot sue the algorithm.

We reject the notion that AI must operate in a vacuum. We propose a Nurse-AI Dyad. In this model, the AI acts as the Moral Compass, performing the heavy computational lifting of ethical calculation and offering a recommendation with a confidence interval. The Nurse Administrator or Charge Nurse acts as the Moral Executive, validating the AI’s reasoning (via CoT logs) and authorizing the action. This retains human accountability while leveraging machine precision. The human remains the “fail-safe,” but the AI provides the “flight plan.”
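The division of labor in the Nurse-AI Dyad can be sketched as a simple approval gate. The class and function names below are hypothetical, and the confidence interval is assumed to be a pair of probabilities supplied by the AI. The point is only that no action is released without a named human reviewer and a reasoning trace to validate.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Recommendation:
    """What the AI (the 'Moral Compass') proposes."""
    action: str
    confidence_interval: Tuple[float, float]  # assumed (low, high) bounds
    reasoning: List[str]                      # Chain of Thought steps


@dataclass
class Decision:
    """What the human (the 'Moral Executive') authorizes."""
    action: Optional[str]
    authorized_by: Optional[str]
    status: str


def review(rec: Recommendation, reviewer: str, approve: bool) -> Decision:
    """Release an action only if a human has reviewed and approved it."""
    if not rec.reasoning:
        # Nothing to validate, so the recommendation cannot be authorized.
        return Decision(None, None, "rejected: no reasoning trace")
    if not approve:
        return Decision(None, reviewer, "overridden by human reviewer")
    return Decision(rec.action, reviewer, "authorized")


rec = Recommendation(
    action="Allocate ECMO unit to Patient Y",
    confidence_interval=(0.72, 0.88),
    reasoning=["Patient Y has the best clinical prognosis."],
)
approved = review(rec, reviewer="Charge Nurse", approve=True)
overridden = review(rec, reviewer="Charge Nurse", approve=False)
print(approved.status, "|", overridden.status)
```

Recording the reviewer's name on every decision, including overrides, is what keeps accountability with the human rather than the algorithm.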

Conclusion

The claim that AI cannot be a moral agent is based on an outdated, romanticized definition of morality that prioritizes human biology over patient impact. As technology advances, we must accept that the capacity to reason, calculate consequences, and adhere to ethical rules constitutes a valid, functional form of agency.

For the nursing profession, the rise of the Artificial Moral Agent is not an existential threat, but an evolutionary necessity. We are approaching a level of complexity in healthcare that the unassisted human mind can no longer navigate ethically. By embracing Functional Moral Agency, we gain a partner capable of navigating the mathematical complexities of modern healthcare ethics, ensuring that justice is not just an ideal, but a computed reality. The future of ethical nursing is not human-only; it is a symbiotic relationship where silicon logic supports biological compassion.

References

Miller, G. A. (1956). The magical number seven, plus or minus two: Some limits on our capacity for processing information. Psychological Review, 63(2), 81–97.

Moor, J. H. (2006). The nature, importance, and difficulty of machine ethics. IEEE Intelligent Systems, 21(4), 18–21.

Simon, H. A. (1955). A behavioral model of rational choice. The Quarterly Journal of Economics, 69(1), 99–118.

Wallach, W., & Allen, C. (2008). Moral machines: Teaching robots right from wrong. Oxford University Press.

Wei, J., Wang, X., Schuurmans, D., Bosma, M., Ichter, B., Xia, F., Chi, E., Le, Q., & Zhou, D. (2022). Chain-of-thought prompting elicits reasoning in large language models. Advances in Neural Information Processing Systems, 35, 24824–24837.

