The Economics of the Fortress: Breaking Institutional Friction in Nursing

Author: Jude Chartier RN / AI Nurse Hub Date: March 30, 2026


Date: February 2026

Abstract

As robotics and artificial intelligence (AI) achieve unprecedented levels of biomimicry, nursing leadership faces a critical decision regarding the aesthetic integration of these technologies into clinical environments. This article examines the tension between humanoid design—characterized by human-like gait, facial expressions, and thermal realism—and functional design, which emphasizes tool-based transparency. Utilizing the “Uncanny Valley” framework, we analyze the psychological impact of hyper-realistic robots on adult Medical-Surgical (Med-Surg) patients and nursing staff. While humanoid designs offer potential therapeutic benefits in specialized dementia and pediatric care, we argue for a functional design priority in general adult populations to preserve professional clarity and mitigate cognitive load. This expanded analysis explores the sensory implications of biomimetic interfaces, the “Night Shift Phenomenon” of robotic surveillance, the erosion of nursing professional identity, and the ethical “Right to Reality.” We conclude with specific recommendations for “Identity Transparency” protocols, including mandatory clinical “Robot Badges,” to safeguard patient autonomy and nurse well-being.

Introduction

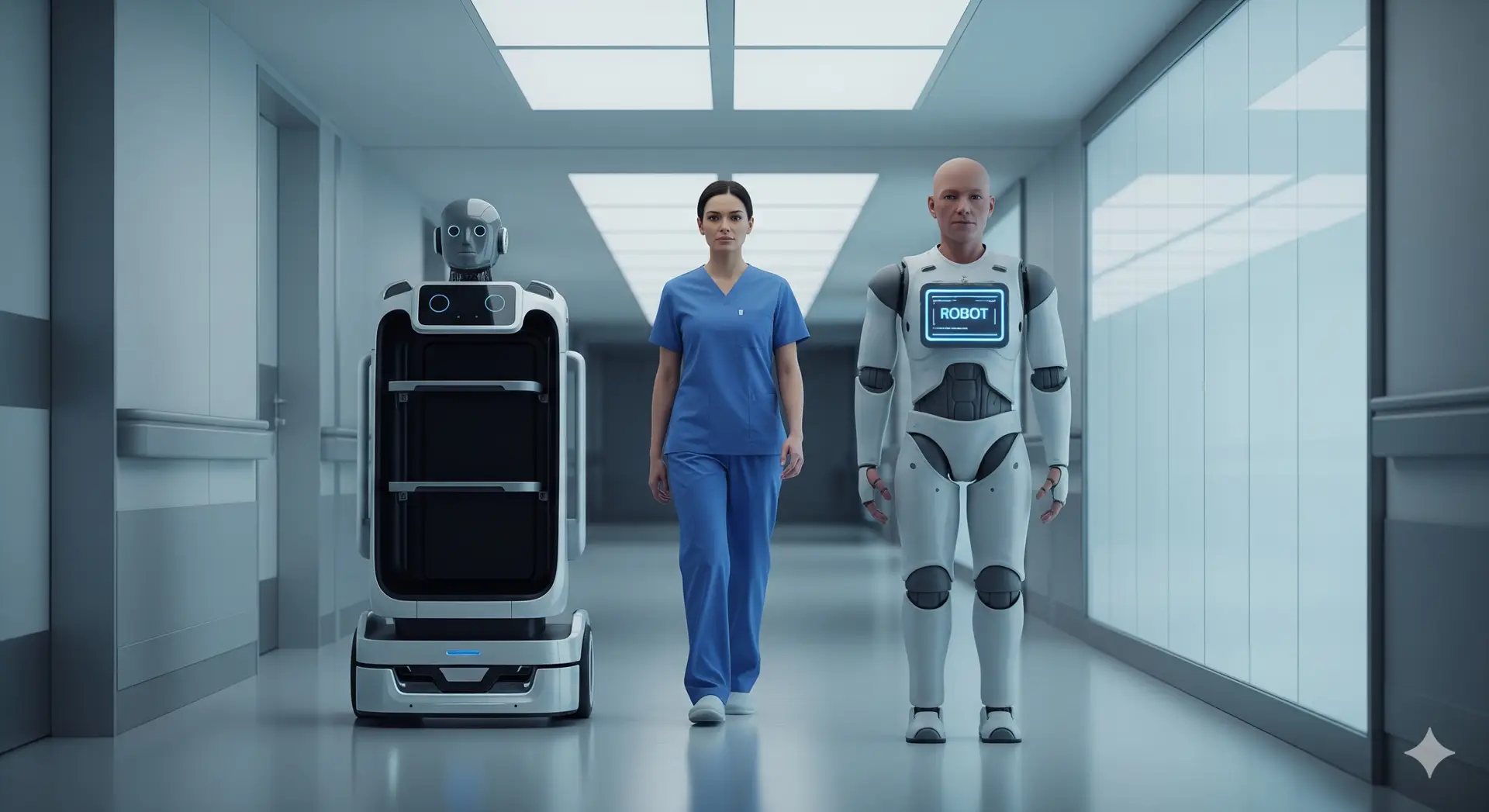

The integration of robotics into the clinical landscape is no longer a distant projection; it is an active, multi-layered evolution that is fundamentally altering the geography of the hospital ward. We have rapidly transitioned from the era of static, backend algorithms—which existed primarily on screens to assist with charting and diagnostics—to an era of “embodied intelligence.” In this new paradigm, AI takes physical form, moving within the sacred, high-pressure space of the patient-care unit. Currently, autonomous delivery systems like Moxi and specialized surgical platforms have set a successful precedent for robots as high-functioning, non-humanoid tools. However, as developers push the boundaries of materials science, soft robotics, and synthetic biology, the “silicon colleague” is beginning to look, move, and feel remarkably like a human peer.

This shift presents a profound ontological and practical dilemma for the nursing profession. As the primary coordinators of care and the final safeguard for patient safety, nurses are the most vital stakeholders in this design debate. The aesthetic of a robot is far more than a marketing choice or a matter of industrial “cool”; it is a clinical variable that influences patient trust, cognitive load, and the overall therapeutic environment. Hyper-realistic humanoid robots offer the promise of intuitive, empathetic interaction. This article weighs both sides of the debate, however, and suggests that for the general Med-Surg adult population a functional, transparent design may be superior for maintaining professional boundaries and ensuring clinical clarity. We must ask: Does a robot that mirrors our image enhance the “human touch,” or does it merely provide a high-fidelity simulation that confuses the most vulnerable among us?

The Psychological Framework: Human-Likeness vs. Eeriness

The primary challenge in designing humanoid robots is the “Uncanny Valley,” a hypothesis first proposed by roboticist Masahiro Mori in 1970. Mori proposed that as a robot’s appearance becomes more human-like, a person’s emotional response becomes increasingly positive and empathetic, but only until a specific point of “near-perfection” is reached. At this critical threshold, minor imperfections (a slight lag in eye tracking, a synthetic sheen to the skin, or a mechanical cadence in speech) can trigger a visceral sense of revulsion, eeriness, or “creepiness” (Mori et al., 2012). One common explanation draws on predictive processing: the brain expects a human but receives a machine, and the resulting “prediction error” may be interpreted by the subconscious as a threat or a biological abnormality.

In a nursing context, this phenomenon is intensified by the high-stakes, high-vulnerability nature of the environment. A patient in a vulnerable state—medicated, in pain, or experiencing post-operative delirium—possesses a diminished capacity for cognitive reconciliation. When interacting with a machine that mimics human life too closely but fails to possess a human “soul” or “presence,” these patients may experience heightened distress or paranoia. The subconscious mind struggles to categorize the entity, leading to a “categorical ambiguity” that can trigger the amygdala’s fear response, potentially increasing cortisol levels and heart rate—the very physiological stressors nurses work to minimize.

Furthermore, anthropomorphism (the tendency to assign human traits to non-human entities) can be a double-edged sword. While it can build quick rapport through eye contact and nodding (Akalin et al., 2024), it also significantly increases the patient’s cognitive load. The patient’s brain must constantly work to reconcile the visual input of a “person” with the logical knowledge that they are interacting with a pre-programmed algorithm. For the adult Med-Surg patient, the mental energy required to navigate this social ambiguity is a finite resource better spent on physical recovery and health literacy. When a robot looks like a machine—a sleek, functional tool—the patient knows exactly how to interact with it: as a utility. When it looks like a person, the patient may feel an implicit social pressure to perform niceties, answer questions with social nuance, or even “worry” about the robot’s “feelings,” further draining their limited energy reserves.

The Biomimetic Breakthrough: Closing the Gap

The debate has reached a fever pitch because of technological milestones that were, until very recently, considered pure science fiction. The debut of biomimetic robots like the Moya platform (DroidUp, 2026) has effectively bridged the physical gap between machine and man. According to DroidUp, Moya’s bipedal walking gait is 93% similar to human biomechanics. Unlike the wheeled bases or “treads” of previous generations, Moya uses a sophisticated musculoskeletal system that mimics human posture, stride length, and weight distribution. This effectively eliminates the “clunky” or “gliding” movement that previously served as a clear, comforting indicator of artificiality.

Even more striking is the introduction of Thermal Realism. Moya maintains a surface body temperature within the normal range of human skin temperature, 32–36°C, through an internal heat-regulation system. The sensory and haptic implications for nursing staff are profound and historically unprecedented. Imagine a nurse, exhausted during the eleventh hour of a twelve-hour shift, accidentally brushing against a “warm” arm while reaching for supplies in a cramped patient room. This tactile realism could be startling, potentially triggering a brief moment of disorientation or a “startle reflex.” If the skin feels warm and the movement is fluid, the nurse’s “lizard brain” registers “human,” while their professional brain registers “machine.” Repeated dozens of times a shift, this internal conflict could contribute to a new form of digital-age burnout that we might call “cognitive dissonance fatigue.”

Realism also extends to AI-driven micro-expressions. Using synthetic facial muscles driven by a dense array of actuators, these robots can mirror human emotions in real time: furrowing a brow in concern during a pain assessment or offering a sympathetic half-smile while delivering water. Although these features are intended to foster empathy, they demand immediate, decisive policy on identity transparency. If a robot looks, moves, and feels like a human, the potential for “unintentional deception” is dangerously high. At AI Nurse Hub, we propose that any robot using high-fidelity biomimicry be required to wear a standard clinical “Robot Badge” (similar in size and placement to an RN badge) or a distinctive, non-human digital identifier, such as a glowing LED status ring or a non-removable “Synthetic Entity” patch. This ensures that even if the machine mirrors a human, the patient’s right to informed consent (knowing they are being cared for by an algorithm) is protected at all times.

The Night Shift Phenomenon: Presence vs. Surveillance

A factor often overlooked by engineers and hospital administrators is the 24/7 reality of hospital life and the psychological shift that occurs during the night. A hyper-realistic humanoid robot that remains stationary in a darkened hallway or at the foot of a bed until a task is assigned can create a profound sense of unease. In a clinical unit, “presence” is usually equated with care—the comforting sight of a nurse checking a monitor. However, when that presence is artificial and hyper-realistic, it can feel like surveillance.

For nursing staff on the night shift, the “creepiness” of a silent, human-shaped entity “watching” the unit can contribute to occupational stress. There is a psychological weight to being “observed” by a humanoid form that does not breathe, blink naturally, or shift its weight. This resembles the “Panopticon effect,” in which a constant, unblinking gaze creates an environment of perceived scrutiny.

Functional designs—machines that look like sleek, high-tech medical equipment—do not carry this psychological baggage. A delivery robot that looks like a rolling cabinet or a specialized cart is clearly a tool in standby mode. Conversely, a humanoid robot that looks like a person standing in a corner is a “phantom colleague,” creating an atmosphere of tension that can interfere with the nurse’s ability to focus on their patients. This “stalking” effect must be mitigated through design—perhaps by ensuring robots return to “docking stations” that clearly signal their “off” status to anyone walking by, or by utilizing “non-humanoid sleep postures” that break the silhouette of the human form when the robot is inactive.

Contextual Design: Pediatrics and Dementia Care

While functional design is prioritized for the Med-Surg adult, the debate shifts when considering specialized populations where emotional regulation and sensory comfort are primary clinical goals. In Pediatrics, humanoid or zoomorphic (animal-like) robots have shown promise in reducing procedural anxiety. The “human touch,” even when simulated by a warm, expressive robot, can provide a sensory distraction that a cold, mechanical tool cannot. Children often engage in “imaginative play” and are more willing to accept a robot as a companion or “brave friend,” which can be leveraged to explain upcoming procedures, encourage physical therapy, or simply provide comfort during parental absence.

In Dementia Care, the use of realistic humanoid robots presents a complex ethical paradox. For some patients experiencing profound social isolation and the “sundowning” effect, a humanoid robot provides a sense of “presence” that mitigates loneliness and agitation. A warm, bipedal robot that can walk alongside a patient in a memory care unit may provide more comfort than a screen or a tablet.

However, this realism may exacerbate confusion for others, leading to an ethical crisis regarding the “Right to Reality.” If a patient with cognitive decline believes a humanoid robot is a human staff member or a long-lost relative, they may attempt to engage in complex social interactions—such as sharing a secret, asking for emotional advice, or seeking genuine human validation—that the robot’s current Large Language Models (LLMs) cannot truly fulfill. This leads to a breakdown of trust and potential agitation when the “person” suddenly repeats a programmed phrase or fails to respond to a nuanced emotional cue. The “therapeutic lie” of a humanoid robot must be weighed against the patient’s dignity. Nurses must act as the ultimate “truth-tellers,” ensuring that the use of humanoid AI does not devolve into a form of high-tech deception that treats the patient as a subject of an experiment rather than a person with a right to know their environment.

Professional Identity and the “Replacement” Anxiety

The move toward humanoid robots also touches upon deep-seated “replacement anxiety” within the nursing profession. When a robot is designed to look exactly like a nurse—wearing scrubs, walking with a human gait, and mimicking “nurse-like” expressions—it sends a subconscious message to both the staff and the public that the nurse’s physical form is replicable and that their presence is merely a set of programmable behaviors. Functional design, conversely, emphasizes augmentation. If the robot looks like a specialized delivery device or a sleek diagnostic station, it is clearly a tool that helps the nurse do their job more efficiently.

If the robot looks like a human, the lines between “the professional” and “the tool” become dangerously blurred. This can lead to a devaluation of nursing’s “emotional labor.” If a hospital administrator sees a robot “providing empathy” with its programmed micro-expressions and warm skin, they may mistakenly believe that the human element of nursing is a luxury or a “soft skill” that can be automated, rather than a clinical necessity rooted in human intuition and lived experience. By advocating for functional designs in general care, nurses protect their professional identity as the irreplaceable human link in the healthcare chain. We must define the robot as an extension of our capability, not a replacement of our presence.

Recommendations for Nursing Leadership

As AI and robotics continue to advance rapidly, nursing leadership must advocate for design standards that prioritize clinical outcomes and staff well-being over aesthetic “novelty.” We recommend the following evidence-based strategies:

Conclusion

The goal of healthcare robotics is not to replace the human touch, but to protect and amplify it. By choosing designs that are clearly artificial yet functionally superior, we allow the nurse to remain the “human” heart of the clinical team. We must resist the urge to build robots in our image simply because we now possess the technical capability to do so. Instead, we should build them in the image of the sophisticated, reliable tools they are.

In the hospital of the future, the most effective robot is not the one that successfully pretends to be a nurse, but the one that serves the patient while wearing a badge that proudly declares its identity as a tool of the trade. By maintaining this clear distinction, we ensure that when a patient reaches out for a hand to hold in their moment of greatest need, they are reaching for a hand that has a pulse, a history, and a soul. The future of nursing is not human vs. machine; it is the human nurse, empowered by machines that know their place.

References

Akalin, N., et al. (2024). Enhancing human-robot interaction in healthcare: A study on nonverbal communication cues and trust dynamics. arXiv:2503.16469v1.

American Nurses Association. (2022). The ethical use of artificial intelligence in nursing practice. https://www.nursingworld.org

DroidUp. (2026). Moya: The world’s first biomimetic AI robot with fully embodied intelligence. [Technical Release].

Frontiers in Digital Health. (2025). A GPT-reinforced social robot for patient communication: A pilot study. Frontiers in Digital Health, 7. https://doi.org/10.3389/fdgth.2025.1653168

Mori, M., MacDorman, K. F., & Kageki, N. (2012). The uncanny valley [From the Field]. IEEE Robotics & Automation Magazine, 19(2), 98-100. (Original work published 1970).

Utilisation of robots in nursing practice: An umbrella review. (2024). PMC – PubMed Central. https://pmc.ncbi.nlm.nih.gov/articles/PMC11881500/
