By: Jude Chartier RN / AI Nurse Hub

Date: January 26, 2026

Abstract

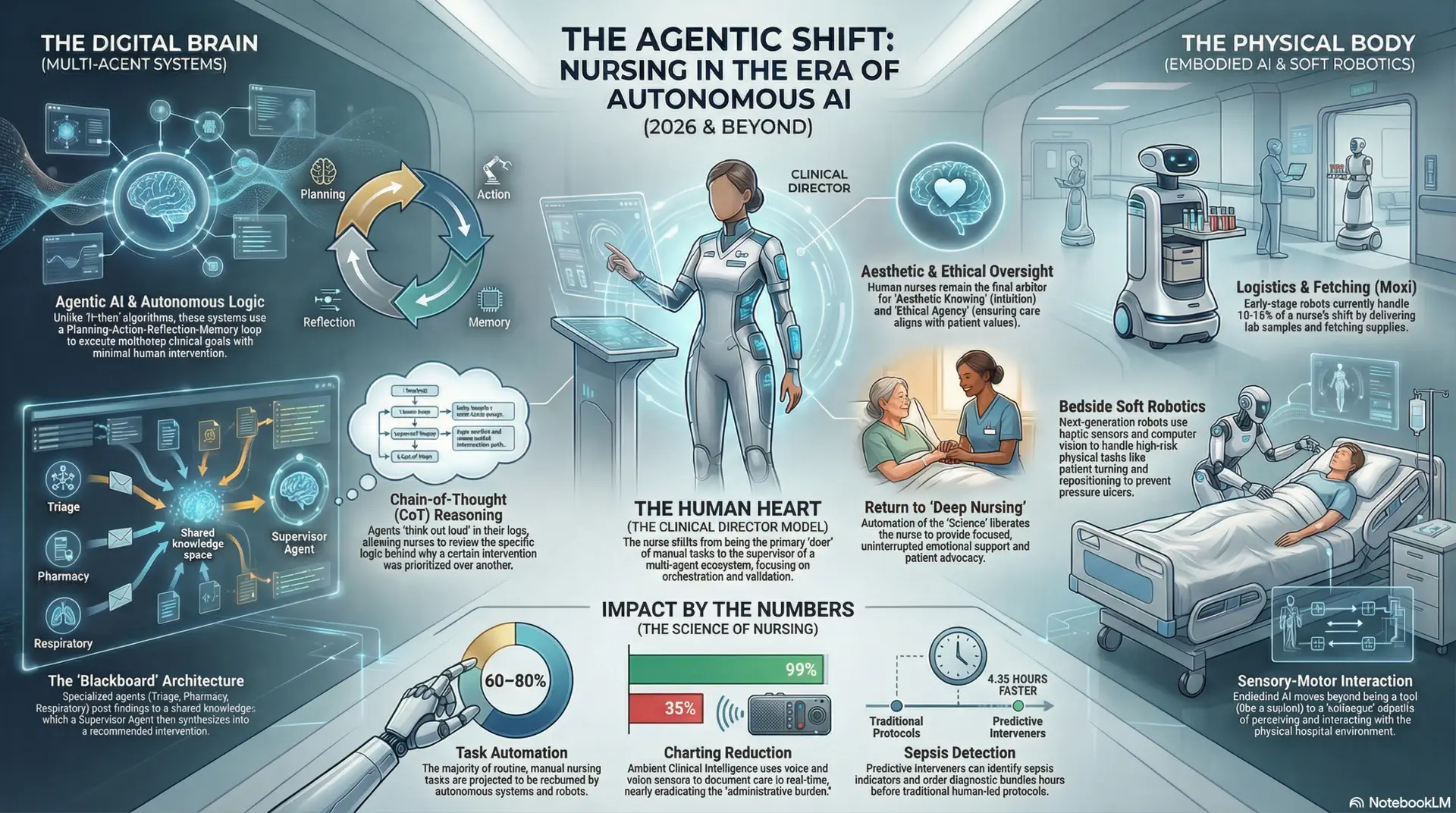

The healthcare sector of 2026 is characterized by a fundamental decoupling of nursing from manual, repetitive bedside labor. This transition is driven by the convergence of two technological pillars: agentic AI documentation systems and socially aware humanoid robotics. This article examines the operational impact of mass-produced general-purpose humanoids—such as the AgiBot A2 and Unitree G1—which have transitioned from laboratory prototypes to bedside clinical partners. By leveraging NVIDIA’s Isaac GR00T reasoning platform and multimodal emotion recognition, these machines now simulate complex social awareness and empathy. Consequently, the role of the professional nurse has evolved into that of a “Strategic Authenticator,” where clinical expertise is redirected toward the oversight of robotic agents, the interpretation of AI-generated care plans, and the preservation of high-level patient advocacy.

The 2026 Paradigm Shift: From Task-Based to Strategic Nursing

For decades, the nursing profession was defined by a high volume of physical tasks and administrative documentation, leading to widespread burnout, moral injury, and chronic staffing crises. The “general-purpose” robotics breakthrough of early 2026, however, has fundamentally altered this trajectory. As highlighted in the CES 2026 Robotics Recap, the industry has shifted from niche, task-specific machines, which were often relegated to simple delivery duties, to versatile humanoids capable of navigating the unstructured and dynamic environments of acute care (Counterpoint Research, 2026).

This shift represents more than technical progress; it is a move toward “Strategic Clinical Orchestration.” In this model, the nurse is no longer the primary executor of repetitive physical labor such as supply logistics and retrieval or bariatric repositioning. Instead, the nurse acts as a high-level overseer, using humanoid robots as the “physical interface” for a hospital-wide artificial intelligence (AI) ecosystem. The psychological burden shifts from physical fatigue to cognitive management: the nurse orchestrates a team of robotic agents to ensure that the patient’s baseline needs are met consistently and precisely, freeing the human provider to focus on the nuances of complex clinical management.

The Invisible Infrastructure: Agentic AI and Documentation Recovery

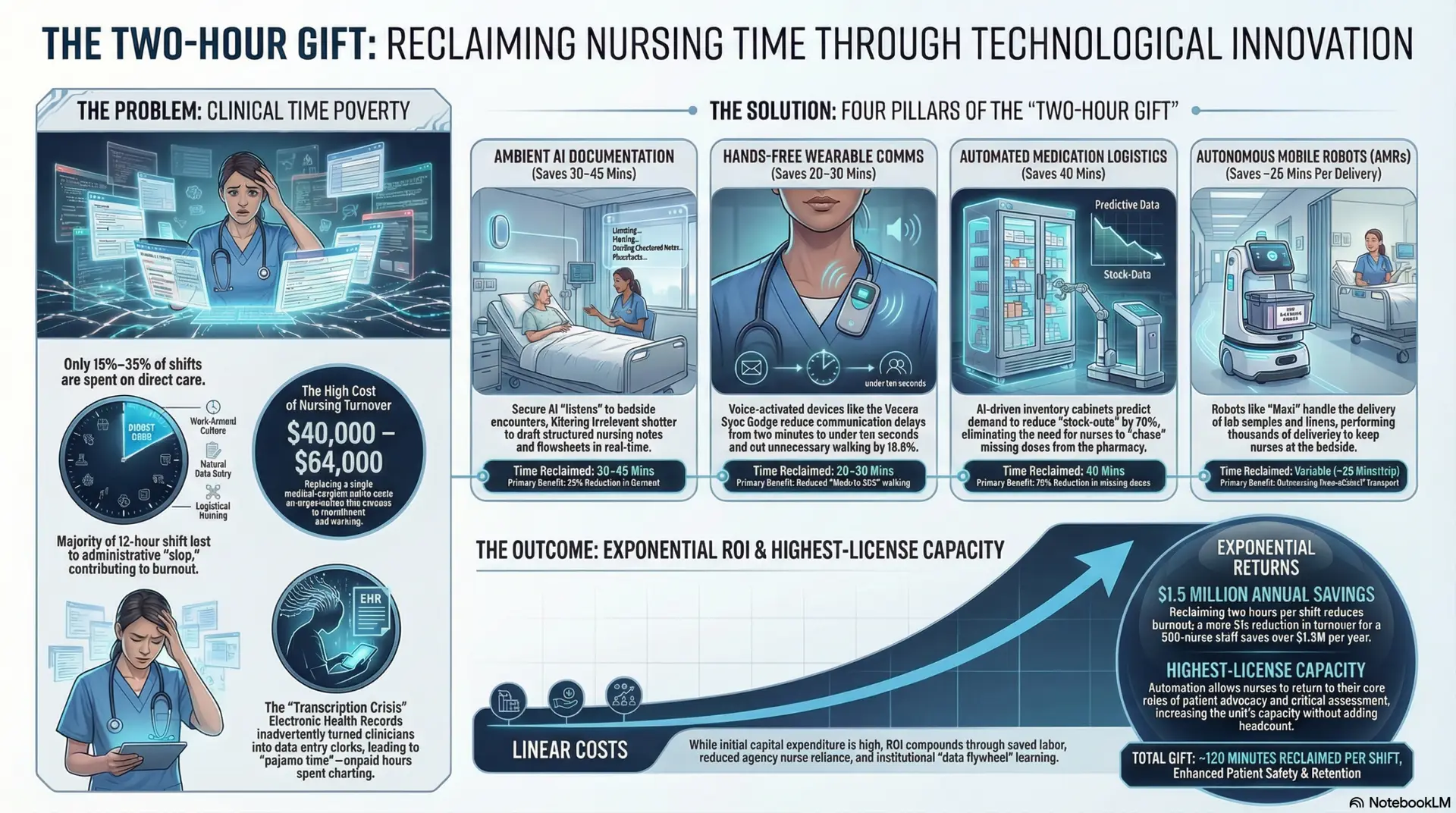

Before addressing the physical presence of robots, one must understand the “Invisible Infrastructure” that supports them. The implementation of the MedLM Nurse Handoff application by HCA Healthcare and Google Cloud has set a benchmark for time reclamation. By automating the ingestion of vitals, lab trends, and nursing notes into structured, predictive reports, this system has reclaimed an estimated 10 million hours of nursing time annually across 190 hospitals (Google Cloud, 2025).

Crucially, this system is “nurse-led”: its underlying algorithms were trained to prioritize nurse-sensitive indicators, such as skin integrity, fall risk, and IV line patency, over generic medical summaries. In practice, the AI continuously monitors the EHR, identifying subtle changes that might escape a busy clinician. For example, if a patient’s urine output decreases slightly while their respiratory rate climbs, the agentic system flags the combination as a potential early warning of fluid overload or sepsis. This allows the nurse to transition from a “data entry clerk” to a “verifying editor,” whose primary function is to authenticate the AI’s synthesis of patient data before it becomes part of the permanent legal record (AHA, 2025). This oversight ensures that while the AI performs the heavy lifting of data synthesis, the human nurse remains the final arbiter of truth.
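The early-warning example above can be made concrete with a short rule. The Python sketch below is a minimal illustration under stated assumptions, not the MedLM Nurse Handoff logic (which this article does not describe): it flags falling urine output combined with a rising respiratory rate, and it models the authentication step described above as a gate that keeps an AI draft out of the record until a nurse signs it. The thresholds (`OLIGURIA_ML_PER_KG_H`, `RR_RISE_THRESHOLD`), the class names, and the function names are all hypothetical and are not clinical guidance.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional

# Hypothetical thresholds for illustration only (not clinical guidance,
# and not taken from MedLM or any HCA Healthcare system).
OLIGURIA_ML_PER_KG_H = 0.5  # assumed urine-output floor, mL/kg/h
RR_RISE_THRESHOLD = 4.0     # assumed rise over baseline, breaths/min


@dataclass
class HourlyObs:
    urine_ml: float  # urine output over the hour, mL
    resp_rate: int   # respiratory rate, breaths/min


def early_warning(obs: List[HourlyObs], weight_kg: float) -> bool:
    """Flag when recent urine output is low AND respiratory rate has climbed."""
    if len(obs) < 4:
        return False  # too little history to compare against a baseline
    baseline, recent = obs[:-2], obs[-2:]
    recent_uo = mean(o.urine_ml for o in recent) / weight_kg
    rr_rise = mean(o.resp_rate for o in recent) - mean(o.resp_rate for o in baseline)
    return recent_uo < OLIGURIA_ML_PER_KG_H and rr_rise >= RR_RISE_THRESHOLD


@dataclass
class DraftSummary:
    text: str
    authenticated_by: Optional[str] = None  # set only when a nurse signs off


def commit_to_record(draft: DraftSummary, record: List[str]) -> None:
    """Append an AI draft to the legal record only after nurse authentication."""
    if draft.authenticated_by is None:
        raise PermissionError("AI draft must be authenticated by a nurse first")
    record.append(f"{draft.text} [authenticated by {draft.authenticated_by}]")


# Usage: four hourly observations for a hypothetical 70 kg patient.
obs = [HourlyObs(60, 16), HourlyObs(55, 16), HourlyObs(30, 21), HourlyObs(25, 22)]
if early_warning(obs, weight_kg=70):
    draft = DraftSummary("Falling urine output with rising RR: assess for fluid overload or sepsis")
    record: List[str] = []
    draft.authenticated_by = "RN reviewer"  # the nurse reviews and signs the draft
    commit_to_record(draft, record)
```

The gate raises an error rather than silently skipping unsigned drafts, so an unauthenticated summary can never reach the record by accident.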

Social Awareness and the Mimicry of Human Feeling

The most profound breakthrough in 2026 is the development of “Socially Aware” robotics. Machines like the Fourier GR-3 and AgiBot A2 are no longer mere mechanical tools; they are programmed with Type III Empathic Interaction capabilities, designed to bridge the emotional gap between nurse visits. This is achieved through three integrated technologies that simulate human-like situational awareness:

- Multimodal Emotion Recognition (MER): Using high-resolution computer vision and vocal pattern analysis, robots can detect micro-expressions of pain, anxiety, or distress in real time. By processing changes in pupil dilation, facial tension, and vocal pitch, the robot can infer a patient’s emotional state with high sensitivity, often identifying distress before the patient explicitly expresses it.

- Neuromorphic Computing: This “brain-inspired” processing allows robots to mirror human expressions, a behavior known as motor mimicry. For instance, if a patient smiles, the robot provides a subtle, warm facial response; if a patient expresses pain, the robot adopts a concerned, attentive posture. The intent is to establish a subconscious rapport, engaging the patient’s mirror neurons and fostering a sense of being “seen” and understood, which is especially important for reducing anxiety in patients in isolation.

- Contextual Reasoning: Using the NVIDIA Isaac GR00T N1.6 platform, robots can “reason” about a patient’s emotional state in light of their clinical context. A robot can distinguish restlessness due to acute post-operative pain, which requires clinical intervention, from restlessness due to hospital-acquired delirium or simple loneliness (Counterpoint Research, 2026). The robot can then offer the appropriate level of interaction: a soothing conversation, music, or immediate escalation to the nursing station.
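The contextual-reasoning step in the list above amounts to choosing a response from a detected state plus clinical context. GR00T is a learned robot foundation model rather than a hand-written rule table, so the explicit rules below are a swapped-in, simplified stand-in for illustration, not the GR00T API or any vendor’s logic. The `BedsideContext` fields, the 72-hour post-operative window, the pain cutoff of 4, and the six-hour loneliness threshold are hypothetical assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Response(Enum):
    CONVERSATION = "offer a soothing conversation"
    MUSIC = "play calming music"
    ESCALATE = "escalate to the nursing station"


@dataclass
class BedsideContext:
    restless: bool                  # output of emotion/behavior recognition (assumed)
    hours_post_op: Optional[float]  # None for non-surgical patients
    pain_score: int                 # 0-10 scale (assumed input)
    delirium_screen_positive: bool  # result of a bedside delirium screen (assumed)
    hours_since_last_visit: float   # since last human visitor (assumed loneliness proxy)
    prefers_music: bool = False


def choose_response(ctx: BedsideContext) -> Optional[Response]:
    """Hypothetical triage: possible clinical causes always go to a human first."""
    if not ctx.restless:
        return None
    in_post_op_window = ctx.hours_post_op is not None and ctx.hours_post_op <= 72
    if in_post_op_window and ctx.pain_score >= 4:
        return Response.ESCALATE  # possible acute post-operative pain
    if ctx.delirium_screen_positive:
        return Response.ESCALATE  # possible delirium needs clinical assessment
    if ctx.hours_since_last_visit >= 6:
        return Response.MUSIC if ctx.prefers_music else Response.CONVERSATION
    return Response.ESCALATE  # unexplained restlessness defaults to human review


# A restless patient 12 hours after surgery reporting pain of 7/10:
print(choose_response(BedsideContext(True, 12.0, 7, False, 1.0)).value)
# escalate to the nursing station
```

The final fallthrough to `ESCALATE` is a deliberate choice in this sketch: when the robot cannot attribute restlessness to a benign cause, it hands the decision to a nurse rather than guessing.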

By mimicking feelings of empathy and understanding, these robots provide “social maintenance” during the long periods when nurses are engaged in higher-level tasks. This does not replace human empathy but ensures that the patient is never truly unobserved or emotionally neglected.

The Nurse as Strategic Authenticator

As humanoid robots handle the physical “lifting and running,” the nurse’s role evolves into that of a “Strategic Authenticator.” In this capacity, the nurse manages a pod of robotic agents, each acting as a distributed sensor and data hub at the patient’s bedside. Every interaction the humanoid has—from assisting with mobility exercises to providing social engagement—is captured as a clinical data point.

The nurse uses an AI-augmented dashboard, a command center of sorts, to synthesize this incoming information. For instance, if a robot detects a subtle change in a patient’s gait during a walk or a slight increase in vocal agitation during a social interaction, the AI “nudges” the nurse with a predictive alert. The nurse must then evaluate the alert: is the gait change a sign of muscle fatigue, or is it a neurological “red flag”?
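One simple way such a gait nudge could be generated is to compare each walk against the patient’s own recent baseline rather than a population norm. The sketch below assumes the robot reports gait speed in meters per second after each assisted walk; the function name, the two-standard-deviation threshold, and the 0.05 m/s floor on the standard deviation are hypothetical choices. The alert only prompts review: deciding between fatigue and a neurological cause remains the nurse’s job.

```python
from statistics import mean, stdev
from typing import List, Optional

MIN_SD_M_PER_S = 0.05  # hypothetical floor so a very steady baseline does not inflate z


def gait_speed_alert(baseline: List[float], latest: float,
                     z_threshold: float = 2.0) -> Optional[str]:
    """Return an alert message if the latest gait speed drops well below baseline."""
    if len(baseline) < 3:
        return None  # too few walks to establish a personal baseline
    mu = mean(baseline)
    sd = max(stdev(baseline), MIN_SD_M_PER_S)
    z = (latest - mu) / sd
    if z <= -z_threshold:
        return (f"Gait speed {latest:.2f} m/s is {abs(z):.1f} SD below this "
                f"patient's baseline of {mu:.2f} m/s: nurse review suggested")
    return None


baseline_walks = [1.00, 1.05, 0.95, 1.00]  # hypothetical prior walks, m/s
print(gait_speed_alert(baseline_walks, 0.80))  # large drop: returns an alert
print(gait_speed_alert(baseline_walks, 0.98))  # within normal variation: None
```

Using a per-patient baseline means a slow but stable walker is not flagged, while a sudden change in a usually brisk walker is.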

The nurse’s professional mandate is to authenticate these interactions. They must validate the robot’s “sensing” with their own clinical intuition and historical knowledge of the patient, ensuring that the technology acts as a guardrail rather than an autonomous decision-maker. This allows the “Golden Hours” reclaimed from manual labor to be reinvested into complex clinical titration, family coordination, and ethical advocacy—areas where human intuition and the ability to “read between the lines” remain irreplaceable.

Ethical Challenges: The Liability Double-Bind and Staffing Ratios

Despite the operational benefits, the implementation of “Robot-Augmented Wards” faces significant ethical and organizational hurdles. Nurses express a persistent and valid fear of the “Efficiency Trap”—the concern that hospital administration will use the time reclaimed by robots to justify an increase in nurse-to-patient ratios (Simbo AI, 2026). If a nurse’s administrative and physical burden is halved, there is an institutional temptation to double their patient load, effectively neutralizing the safety and wellness benefits of the technology and returning the workforce to a state of high-pressure burnout.
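The arithmetic behind the “Efficiency Trap” is simple enough to check directly. The figures below are hypothetical (a 12-hour shift, five patients, two hours of task work per patient before robot support), chosen only to show how halving the per-patient burden and then doubling the patient load returns the nurse’s free time to its starting point.

```python
SHIFT_HOURS = 12.0  # hypothetical shift length


def free_hours(patients: int, task_hours_per_patient: float) -> float:
    """Hours left in the shift after physical and administrative task work."""
    return SHIFT_HOURS - patients * task_hours_per_patient


before = free_hours(patients=5, task_hours_per_patient=2.0)         # no robot support
with_robots = free_hours(patients=5, task_hours_per_patient=1.0)    # burden halved
ratio_raised = free_hours(patients=10, task_hours_per_patient=1.0)  # load doubled

print(before, with_robots, ratio_raised)  # 2.0 7.0 2.0
```

Under these assumptions, the five hours reclaimed by robot support disappear entirely once the patient load doubles.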

Furthermore, a “Liability Double-Bind” complicates the nurse-tech relationship. Because software and robotic agents do not hold professional licenses, the legal and ethical responsibility for patient outcomes remains with the licensed nurse. If an AI summary contains a “hallucination” (reported at a 14% occurrence rate in early 2025 pilots) and the nurse authenticates it without catching the error, the professional burden rests on the nurse’s license (NCBI, 2025). This necessitates a robust Human-Robot Collaboration (HRC) framework in which nurses are not merely users of technology but the formal “Commanders” of the robotic fleet, with clear authority to override any AI suggestion or robotic action based on clinical judgment.
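The liability stakes of the 14% figure can be made concrete with expected-value arithmetic. This sketch treats that figure as a per-summary probability of a hallucination (an assumption, since the denominator of the cited rate is not specified here) and introduces a hypothetical `miss_rate`, the fraction of hallucinations a nurse fails to catch during authentication; neither the function nor the miss rates come from the cited sources.

```python
HALLUCINATION_RATE = 0.14  # early 2025 pilot figure, assumed here to be per summary


def expected_signed_errors(summaries: int, miss_rate: float) -> float:
    """Expected count of hallucinated summaries that a nurse authenticates anyway."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return summaries * HALLUCINATION_RATE * miss_rate


# Hypothetical: 1,000 authenticated summaries at two assumed levels of vigilance.
for miss_rate in (0.10, 0.02):
    errors = expected_signed_errors(1000, miss_rate)
    print(f"miss rate {miss_rate:.0%}: about {errors:.0f} erroneous summaries signed")
```

Because every authenticated error carries the nurse’s license, the miss rate, and not only the model’s hallucination rate, determines the nurse’s exposure.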

Conclusion: A New Era of Intelligent Caring

In 2026, the best nurse is not the one who works the hardest physically; it is the one who strategically deploys technology to ensure the most precise and compassionate patient outcome. Humanoid robots, with their burgeoning ability to mimic feeling and handle physical drudgery, have protected the “human touch” by removing the exhaustion and administrative clutter that once obscured it. As the profession moves forward, the nurse remains the final authority on clinical judgment, acting as the vital link between high-tech robotic precision and high-touch human compassion. The future of nursing is not the replacement of the human, but the amplification of the human’s unique capacity for wisdom and advocacy through the power of embodied AI.

References

American Hospital Association (AHA). (2025). Smarter, safer hospitals: How HCA Healthcare is using AI to redefine patient safety. https://www.aha.org/news/blog/2025-10-16-smarter-safer-hospitals-how-hca-healthcare-using-ai-redefine-patient-safety

Counterpoint Research. (2026). CES 2026 robotics announcements recap: The rise of general-purpose humanoids. https://counterpointresearch.com/en/insights/ces-2026-robotics-announcements-recap

Google Cloud. (2025). How nurses are charting the future of AI at America’s largest hospital network. https://cloud.google.com/transform/nurse-handoff-ai-chart-app-hca-healthcare-better-patient-outcomes

National Center for Biotechnology Information (NCBI). (2025). 2025 watch list: Artificial intelligence in health care and nursing liability. https://www.ncbi.nlm.nih.gov/books/NBK613808/

Simbo AI. (2026, January). The role of A.I. in transforming nursing workloads and staffing ratios. https://www.simbo.ai/blog/the-role-of-a-i-in-transforming-nursing-workloads-and-staffing-ratios

SullivanCotter. (2026, January 6). How AI will shape the future of health care in 2026. https://sullivancotter.com/ai-and-the-future-of-health-care/